I was running an experiment on sample variance. All I needed was a program that does a lot of calculations. So I wrote one, and it took a long time. I didn’t even wait for it to finish: after 2 hours I knew it would need at least as long again.

Task Manager showed that only a single core was being used. Fuck that shit! Why did I buy a powerful machine if I couldn’t use it to its full potential?

But here comes asynchronous programming – my saviour.

My background

I have almost no experience with writing threaded programs. You see, all I did was PHP. It is special because every time a request comes in, it is handled by a new process. So, if the server handles 10000 requests, it spawns 10000 processes. I had nothing to do with that; the layers above my code took care of it. My software could fail for some requests, but the others would still work without any problem.

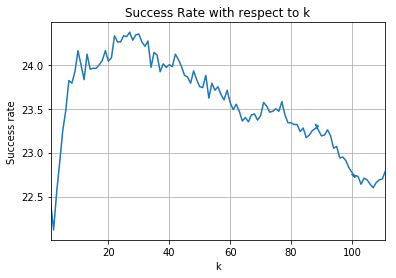

This time, however, I needed a single run of a program that took a very long time and a lot of computation. Looking at the picture above, my usual approach was clearly not the best fit. I know a thing or two about asynchronous programming, but I don’t have much experience with it. All I did was some university assignments that I did not fully understand.

Yep, my approach to learning sucked ass!

First attempt: threading

I decided that threading could be the way to go. One of the possible outcomes was success, so I definitely had to try it. You can check the exact code that I wrote, but I want to focus on threading here. Here is the idea.

Code

    import threading
    import time

    k = 1000 # Amount of tests

    # Generates populations, takes a sample, calculates statistics
    def run_experiment(callback):
        pass

    # Saves data for further processing
    def save_data(arg):
        pass

    for i in range(k):
        t = threading.Thread(target=run_experiment, args=(save_data, ))
        t.start()

    # Wait until all threads are donezo
    while threading.active_count() > 1:
        time.sleep(3)

The fact that I used a callback to save data shows some JavaScript influence! I only noticed that later. 🙂
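As a side note on the waiting loop, here is a minimal sketch of the same idea that keeps a reference to every thread and calls `join()` on each, so the main thread wakes up as soon as the work is finished instead of polling every 3 seconds. The experiment body, the `index` argument, the lock and the small `k` are assumptions made for illustration only; the real experiment code is not shown here.

```python
import threading

k = 8  # small number of tests, just for illustration

results = []
results_lock = threading.Lock()

# Saves data for further processing (hypothetical: collects it in a list)
def save_data(value):
    with results_lock:
        results.append(value)

# Hypothetical stand-in for the real experiment
def run_experiment(callback, index):
    callback(index * index)

# Keep a reference to every thread so we can wait for each one
threads = [
    threading.Thread(target=run_experiment, args=(save_data, i))
    for i in range(k)
]
for t in threads:
    t.start()

# join() blocks until the given thread has finished
for t in threads:
    t.join()

print(sorted(results))
```

Compared with checking `threading.active_count()`, this waits only for the threads this script started, so it is not affected by threads created elsewhere in the program.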

Results

When I wrote this, I felt like a super developer!

But when I launched the program, my mood changed. It was clearly not working. It just ate all the RAM, and CPU usage had been better even without threads! Clearly not what I expected.

I immediately asked myself: why is it not working the way I expected? Some ideas that came to mind:

- I am trying to launch 1000 threads at once without considering how many cores the CPU has. As a result, the CPU keeps switching context and spends little time on the actual calculations.

- RAM filled up with data from all 1000 threads. Each thread created a list with a million integers, which takes a lot of RAM.

- RAM couldn’t be freed because no thread had finished its job, so they all still needed that data.

P.S. These reasons might be way off the mark; I am still no expert at this. If you can confirm or deny my ideas, please let me know!
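The memory guesses above can be tested by capping how many threads run at once. Below is a minimal sketch using `concurrent.futures.ThreadPoolExecutor` with one worker per core, so only a handful of populations exist in memory at any moment. The body of `run_experiment`, the population and sample sizes, and the `seed` parameter are assumptions for illustration, not the original experiment. One more thing worth knowing: in standard CPython builds the global interpreter lock (GIL) lets only one thread execute Python bytecode at a time, so a cap like this should help with RAM, but CPU-bound pure-Python work in threads will most likely still keep just one core busy.

```python
import os
import random
import statistics
from concurrent.futures import ThreadPoolExecutor

k = 20  # number of tests, kept small for illustration

# Hypothetical stand-in for the real experiment:
# builds a population, takes a sample, returns the sample variance
def run_experiment(seed):
    rng = random.Random(seed)
    population = [rng.randint(0, 1000) for _ in range(10_000)]
    sample = rng.sample(population, 100)
    return statistics.variance(sample)

# At most one worker thread per core; the remaining tasks wait in a queue
max_workers = os.cpu_count() or 1
with ThreadPoolExecutor(max_workers=max_workers) as executor:
    # map() returns the results in the same order as the inputs
    variances = list(executor.map(run_experiment, range(k)))

print(len(variances))
```

Each population becomes garbage as soon as its task returns, so memory use should stay roughly proportional to `max_workers` rather than to `k`.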

Second attempt: multiprocessing

After some googling, I found a script that basically pushes all CPU cores to the maximum. It used multiprocessing, and I felt I had to try that. As always, you have unlimited access to the code.

Code

I learned my lesson from the attempt with threads, and this time I took into account how many cores the machine has.

    import multiprocessing
    import time

    k = 1000  # Number of tests

    # Generates populations, takes a sample, calculates statistics
    def run_experiment(sums):
        pass

    if __name__ == '__main__':
        cores = multiprocessing.cpu_count()
        manager = multiprocessing.Manager()
        sums = manager.dict()
        left = k

        while left > 0:
            # Let's see how many cores are free
            diff = cores - len(multiprocessing.active_children()) - 1
            # Never launch more experiments than are still left
            launch = min(diff, left)
            if launch > 0:
                # For every free core, launch a new experiment
                for i in range(launch):
                    p = multiprocessing.Process(target=run_experiment, args=(sums, ))
                    p.start()
                left -= launch
            time.sleep(0.25)

        # Let's wait for processes that are still doing their thing
        # (the manager's own server process stays alive, hence "> 1")
        while len(multiprocessing.active_children()) > 1:
            time.sleep(1)

Results

Success! All cores were working, and the experiment finished within a few minutes.

Now I feel like a super developer! 🙂
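The hand-rolled scheduling loop above can probably be replaced by `multiprocessing.Pool`, which keeps a fixed set of worker processes (by default as many as the machine has CPUs) and hands each one a new task as soon as it is free. This is only a sketch under assumptions: `run_experiment` here returns its result instead of writing into a shared `manager.dict()`, and its body, the `seed` parameter and the sizes are made up for illustration.

```python
import multiprocessing
import random
import statistics

k = 16  # number of tests, kept small for illustration

# Hypothetical stand-in for the real experiment:
# builds a population, takes a sample, returns the sample variance
def run_experiment(seed):
    rng = random.Random(seed)
    population = [rng.randint(0, 100) for _ in range(10_000)]
    sample = rng.sample(population, 100)
    return statistics.variance(sample)

def run_all(num_tests):
    # Pool() starts one worker process per CPU by default
    with multiprocessing.Pool() as pool:
        # map() blocks until every experiment is done and keeps input order
        return pool.map(run_experiment, range(num_tests))

if __name__ == '__main__':
    variances = run_all(k)
    print(len(variances))
```

Returning values through `pool.map` avoids both the polling with `time.sleep` and the shared dictionary, at the cost of collecting all results only once every experiment has finished.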

Conclusions

The biggest takeaway from this experiment for me is that I still have a lot of ground to cover with threading. I have already entered the forest, but there are many trees I have to get through to reach mastery. I still have to figure out the actual difference between threads and processes. I have read about it, but I don’t learn that way. I learn through doing. I need some more experiments with asynchronous programming to work on.
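One difference between threads and processes can be seen by doing, in a few lines: threads share the memory of the process that started them, while a child process works on its own copy. The sketch below is a small experiment along those lines; all names in it (`results`, `record`, `run_in_thread`, `run_in_process`) are hypothetical and not taken from the code above.

```python
import multiprocessing
import threading

results = []  # module-level state, used to show what is and is not shared

def record(value):
    results.append(value)

def run_in_thread(value):
    t = threading.Thread(target=record, args=(value,))
    t.start()
    t.join()

def run_in_process(value):
    p = multiprocessing.Process(target=record, args=(value,))
    p.start()
    p.join()

if __name__ == '__main__':
    run_in_thread('from thread')
    # The thread appended to the very same list the main thread sees
    print(results)

    run_in_process('from process')
    # The child process appended to its own copy, so this list is unchanged
    print(results)
```

This is also why the multiprocessing attempt needed a `manager.dict()` to collect results: an ordinary dictionary changed inside a child process would not be visible to the parent.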

How do you like asynchronous programming? What challenges do you face? Maybe you found it to be a breeze? Let me know!